Overview

Abstract

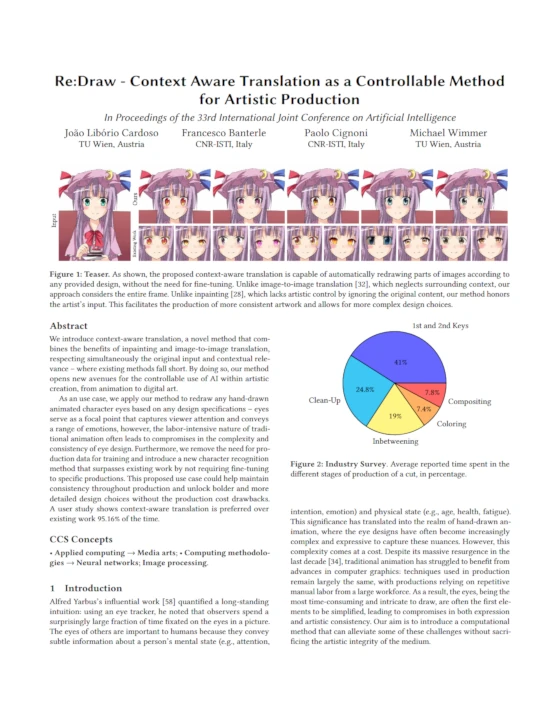

We introduce context-aware translation, a novel method that combines the benefits of inpainting and image-to-image translation: it respects both the original input and contextual relevance, where existing methods fall short. By doing so, our method opens new avenues for the controllable use of AI within artistic creation, from animation to digital art.

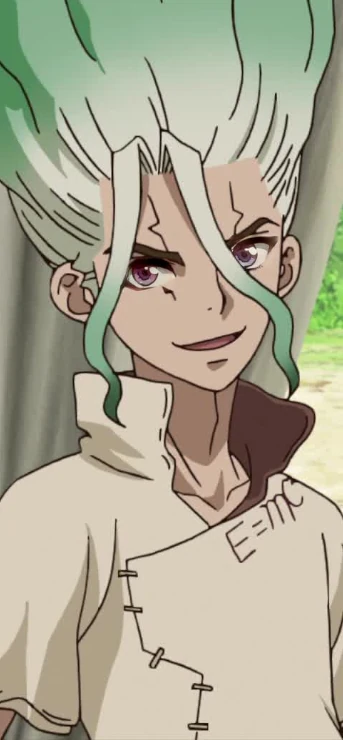

As a use case, we apply our method to redraw the eyes of any hand-drawn animated character according to any design specification. Eyes serve as a focal point that captures viewer attention and conveys a range of emotions; however, the labor-intensive nature of traditional animation often leads to compromises in the complexity and consistency of eye design. Furthermore, we remove the need for production data during training and introduce a new character recognition method that surpasses existing work by not requiring fine-tuning to specific productions.

This use case could help maintain consistency throughout production and unlock bolder, more detailed design choices without the associated production cost. A user study shows that context-aware translation is preferred over existing work 95.16% of the time.

Paper Resources

@inproceedings{ijcai2024p842,
  title = {Re:Draw - Context Aware Translation as a Controllable Method for Artistic Production},
  author = {Cardoso, João Libório and Banterle, Francesco and Cignoni, Paolo and Wimmer, Michael},
  booktitle = {Proceedings of the Thirty-Third International Joint Conference on Artificial Intelligence, {IJCAI-24}},
  publisher = {International Joint Conferences on Artificial Intelligence Organization},
  editor = {Kate Larson},
  pages = {7609--7617},
  year = {2024},
  month = {8},
}

Media

Videos

Explainer

Robustness Test

Results Sample

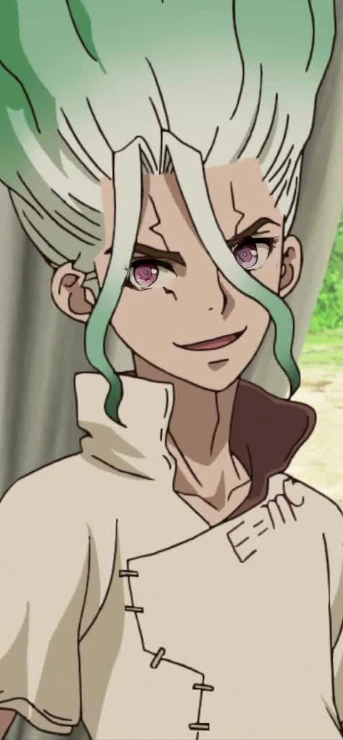

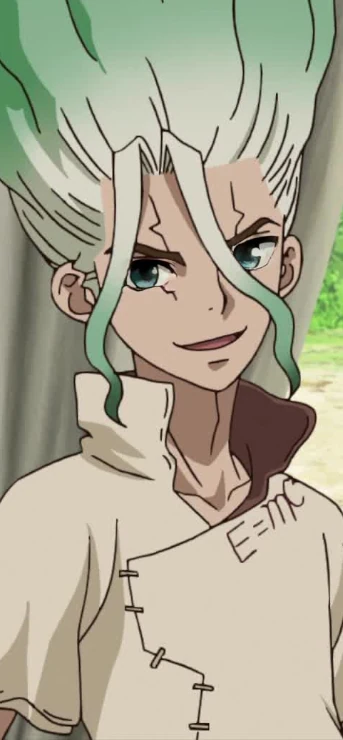

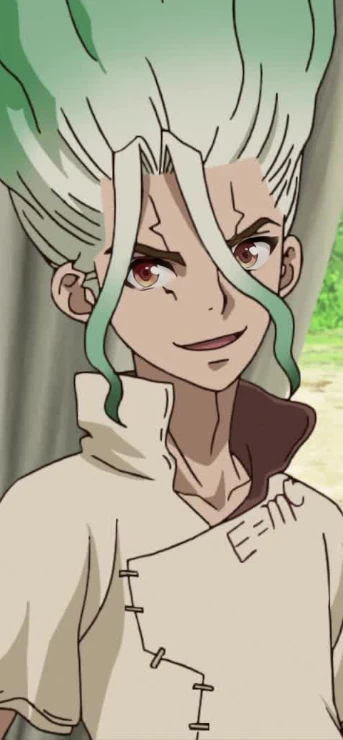

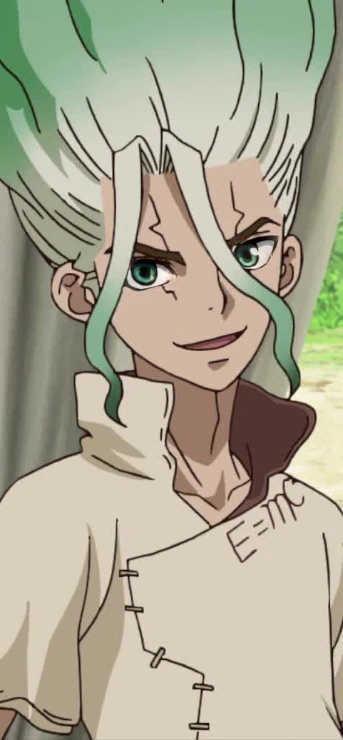

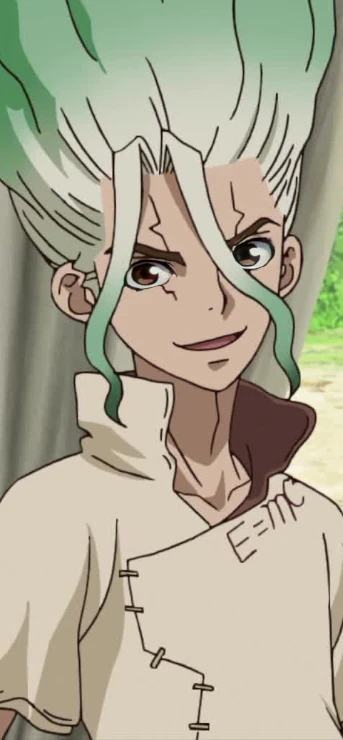

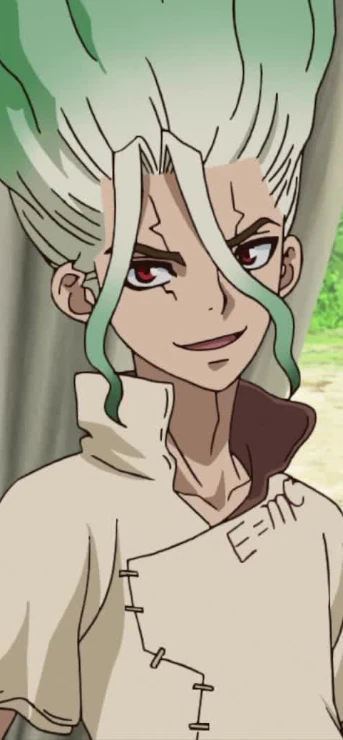

Our method can be used to increase the amount of detail in characters' eyes. Note that more detail doesn't necessarily translate to better or more appealing artwork; simplified designs are sometimes a stylistic choice. Our method makes higher detail an option when desired, without increasing production time.

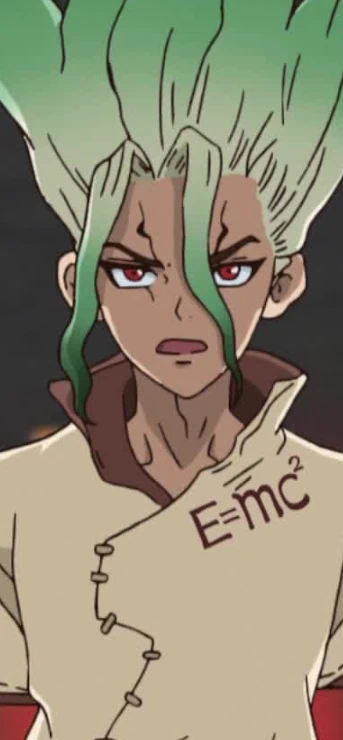

Examples from a diverse set of anime series, including Kobayashi's Dragon Maid, The Ancient Magus Bride, Idolmaster: Xenoglossia, Dr. Stone, Eden of the East, Darling in the Franxx and Wotakoi:

A side effect of how our proposed networks are trained is that our method can also apply entirely different designs to characters. While not the focus of our work, this demonstrates the generalizability of our method and the robustness of the learned models:

In these examples, we demonstrate robustness across challenging scenarios including atypical facial proportions, high-resolution imagery, oblique camera angles, head tilts, irregular lighting, and occlusions caused by hair:

Dragon Maid

Ancient Magus Bride

Angels of Death

Re:Zero

Dr. Stone

Wotakoi

Credits