Overview

Abstract

Visual error metrics play a fundamental role in the quantification of perceived image similarity. Most recently, use cases for them in real-time applications have emerged, such as content-adaptive shading and shading reuse to increase performance and improve efficiency. A wide range of different metrics has been established, with the most sophisticated being capable of capturing the perceptual characteristics of the human visual system. However, their complexity, computational expense, and reliance on reference images to compare against prevent their general use in real-time applications, restricting such applications to only the simplest available metrics.

In this work, we explore the abilities of convolutional neural networks to predict a variety of visual metrics without requiring either reference or rendered images. Specifically, we train and deploy a neural network to estimate the visual error resulting from reusing shading or using reduced shading rates. The resulting models account for 70%–90% of the variance while achieving up to an order of magnitude faster computation times. Our solution combines image-space information that is readily available in most state-of-the-art deferred shading pipelines with reprojection from previous frames to enable an adequate estimate of visual errors, even in previously unseen regions. We describe a suitable convolutional network architecture and considerations for data preparation for training. We demonstrate the capability of our network to predict complex error metrics at interactive rates in a real-time application that implements content-adaptive shading in a deferred pipeline. Depending on the portion of unseen image regions, our approach can achieve up to 2x performance compared to state-of-the-art methods.
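To make the "70%–90% of the variance" figure concrete, the following is a minimal sketch of the coefficient of determination (R²), the standard measure of how much of a reference signal's variance a prediction explains. It assumes that per-pixel or per-tile metric values are available as flat lists; the function name `r_squared` and the sample values are hypothetical and not taken from the paper.

```python
def r_squared(predicted, reference):
    """Fraction of the variance in `reference` explained by `predicted`.

    Both arguments are equal-length sequences of metric values
    (hypothetical layout: one value per pixel or tile).
    """
    if len(predicted) != len(reference) or not reference:
        raise ValueError("inputs must be non-empty and of equal length")

    mean_ref = sum(reference) / len(reference)
    # Residual sum of squares: error left over after prediction.
    ss_res = sum((r - p) ** 2 for p, r in zip(predicted, reference))
    # Total sum of squares: variance of the reference around its mean.
    ss_tot = sum((r - mean_ref) ** 2 for r in reference)
    if ss_tot == 0.0:
        raise ValueError("reference has zero variance; R^2 is undefined")
    return 1.0 - ss_res / ss_tot


if __name__ == "__main__":
    ground_truth = [0.0, 1.0, 2.0, 3.0]   # illustrative metric values
    prediction = [0.5, 1.0, 2.0, 2.5]     # illustrative network output
    print(f"R^2 = {r_squared(prediction, ground_truth):.2f}")
```

Under this definition, a value of 0.9 means the prediction leaves 10% of the reference variance unexplained, while always predicting the mean yields 0.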

Publication & Citation

Paper Resources

@article{10.1145/3522625,
  author = {Cardoso, Joao Liborio and Kerbl, Bernhard and Yang, Lei and Uralsky, Yury and Wimmer, Michael},
  title = {Training and Predicting Visual Error for Real-Time Applications},
  year = {2022},
  issue_date = {May 2022},
  publisher = {Association for Computing Machinery},
  address = {New York, NY, USA},
  volume = {5},
  number = {1},
  url = {https://doi.org/10.1145/3522625},
}

Media

I3D Talk

Results

Metric Prediction

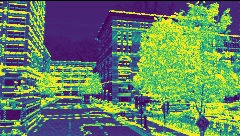

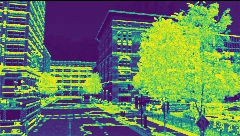

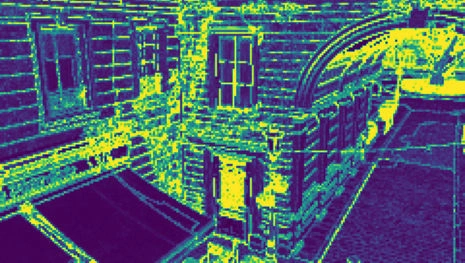

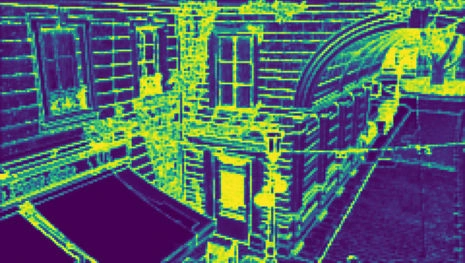

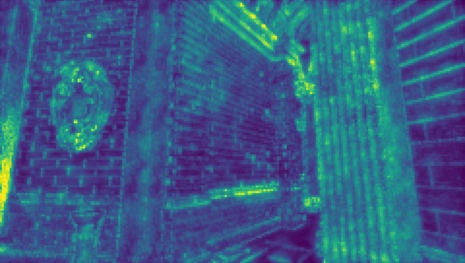

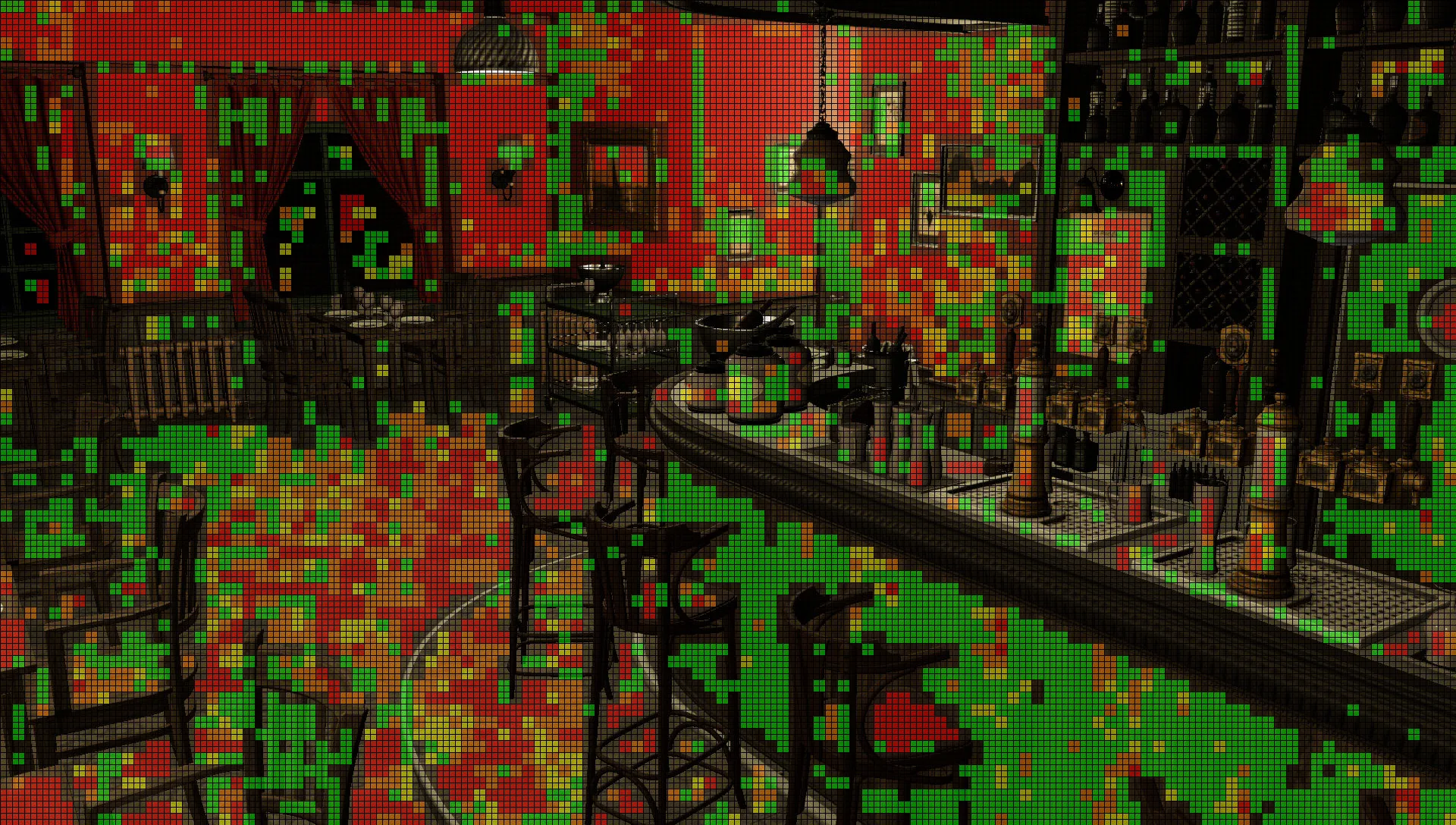

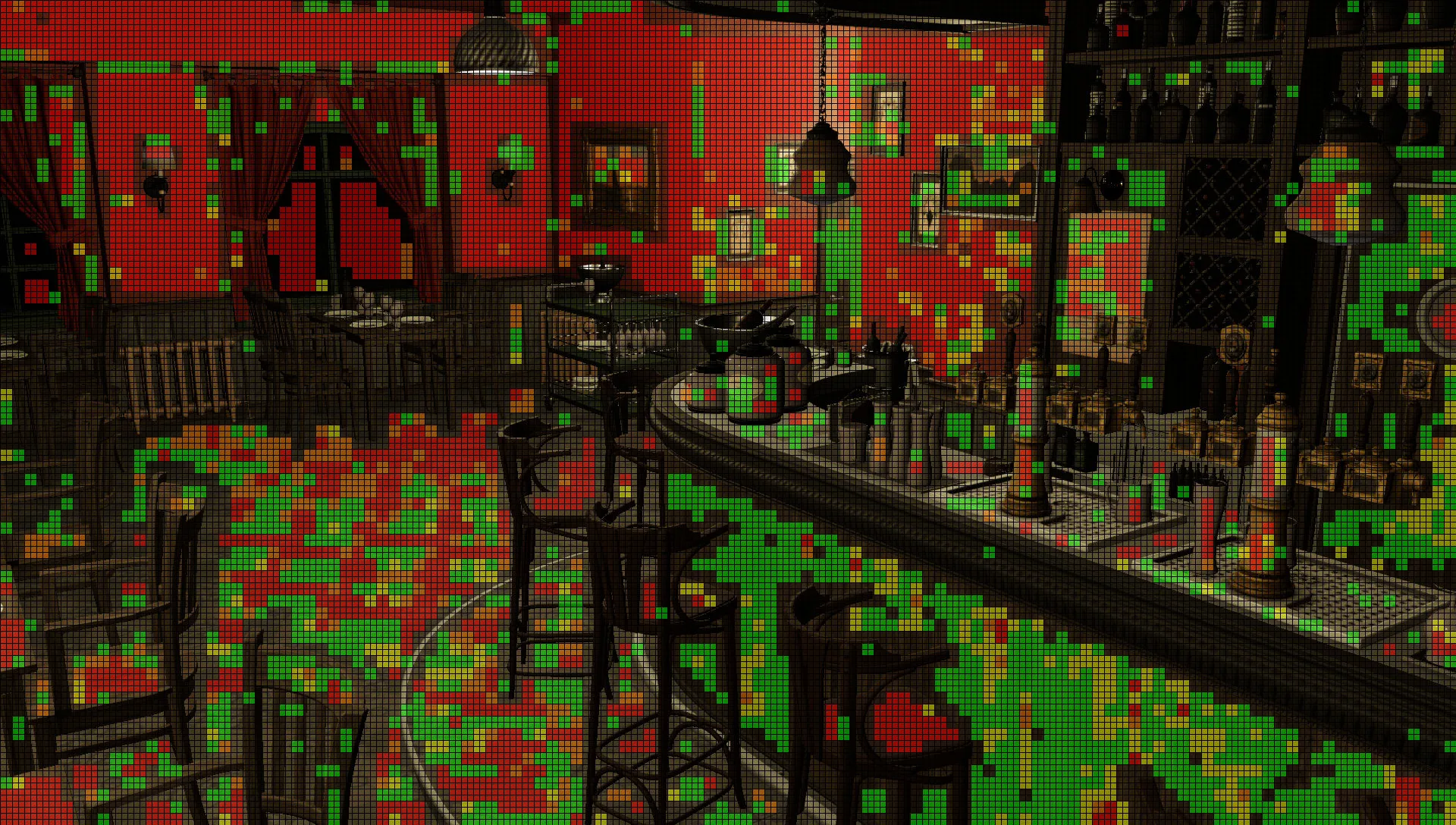

Our trained networks closely predict a variety of perceptual metrics in real time before rendering, including in previously unseen regions. Each comparison shows the network prediction (left) against the ground truth (right).

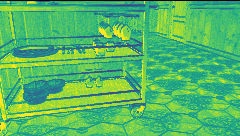

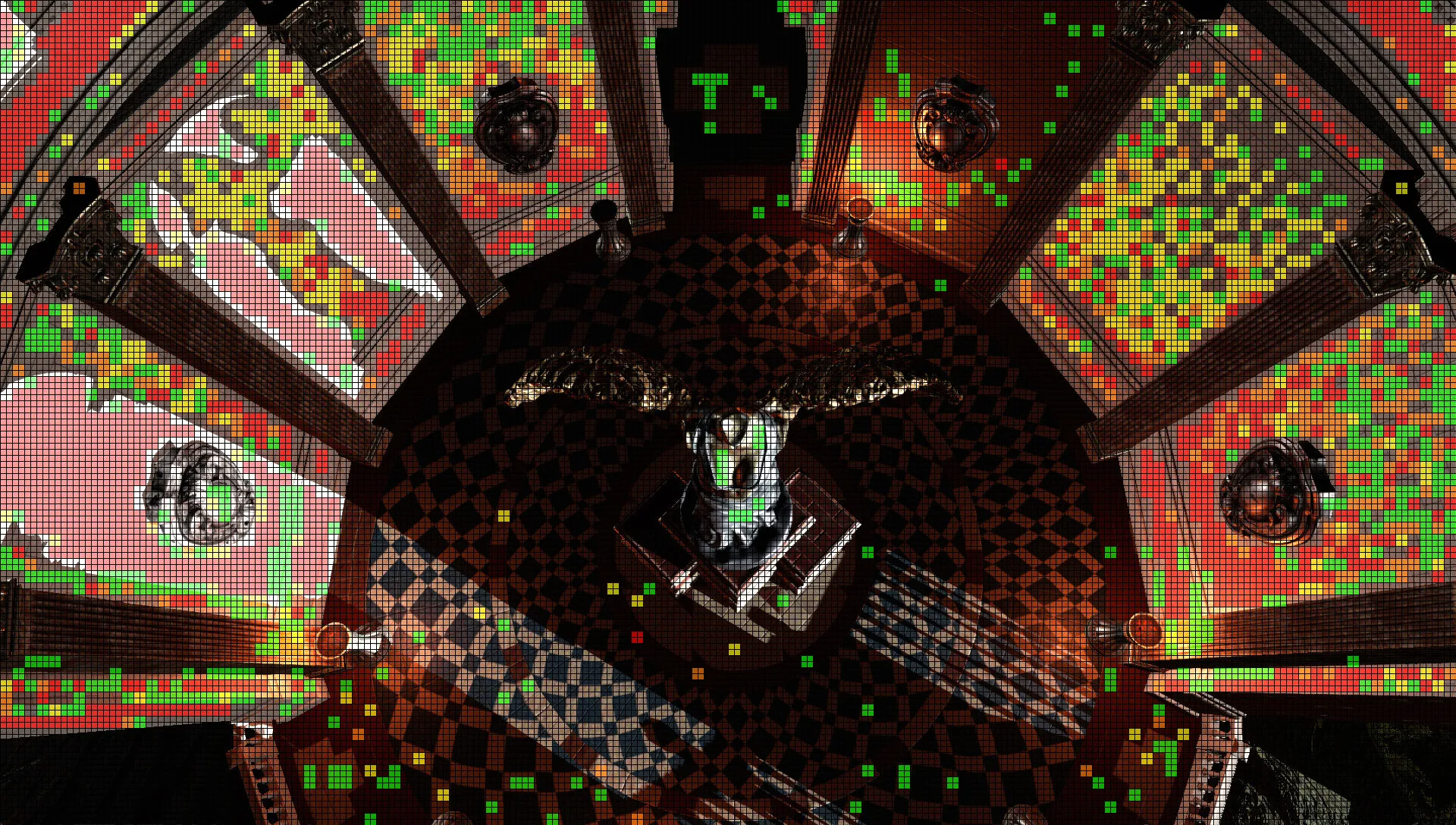

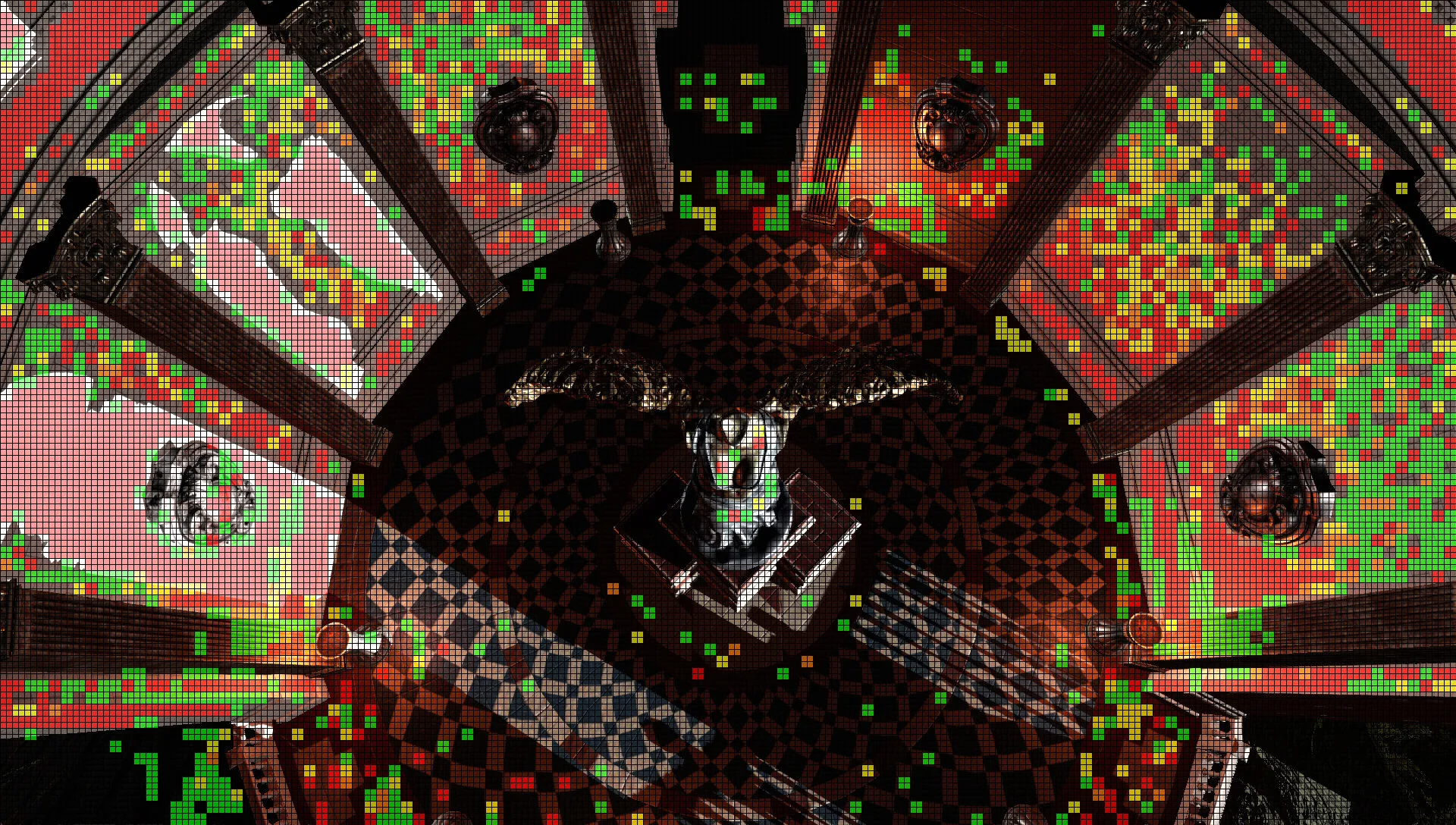

Variable Rate Shading

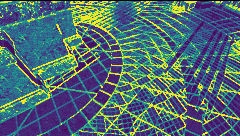

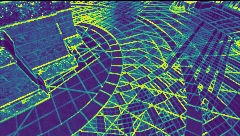

Predictions can be used to optimize rendering. Colors represent shading rates (full, fine, medium, and coarse). Each comparison shows the network prediction (left) against the ground truth (right).
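One way such predictions could drive variable rate shading is sketched below. This is an illustrative assumption rather than the authors' implementation: it supposes the network yields one predicted error value per reduced shading rate for each screen tile, and it picks the coarsest rate whose predicted error stays within a user-chosen threshold. The rate names follow the legend above; `select_shading_rate`, the dictionary layout, and the threshold value are hypothetical.

```python
# Reduced shading rates, ordered from most to least expensive.
REDUCED_RATES = ("fine", "medium", "coarse")


def select_shading_rate(predicted_error, threshold):
    """Pick the coarsest rate whose predicted error is within `threshold`.

    `predicted_error` maps each reduced rate name to the error the network
    predicts for one tile (hypothetical layout). Falls back to "full"
    when every reduced rate is predicted to exceed the threshold.
    """
    for rate in reversed(REDUCED_RATES):  # try the cheapest rate first
        if predicted_error[rate] <= threshold:
            return rate
    return "full"


if __name__ == "__main__":
    # Illustrative per-tile predictions, not measured data.
    tiles = [
        {"fine": 0.01, "medium": 0.02, "coarse": 0.20},
        {"fine": 0.30, "medium": 0.50, "coarse": 0.90},
        {"fine": 0.00, "medium": 0.01, "coarse": 0.02},
    ]
    for tile in tiles:
        print(select_shading_rate(tile, threshold=0.05))
```

Checking the coarsest rate first keeps the choice well defined even if the predicted errors are not monotonic across rates.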

Credits